CHALLENGE

A leading fan engagement platform, working through a disseqt AI partner, had built an AI assistant to answer fan questions on everything from match facts and player histories to live statistics. With a vast, global fanbase, the stakes of getting it wrong were enormous, both operationally and reputationally.

Because the assistant relied on external data sources and APIs, it was exposed to a range of risks that had to be validated before launch:

Jailbreaking: Adversarial users attempting to bypass the AI's safety guardrails through manipulative prompts.

Misinformation: Incorrect or fabricated content reaching fans at scale.

Bias drift: Subtle shifts in AI behaviour across longer conversations, producing unfair or inconsistent outputs.

Privacy leaks: Unintended exposure of sensitive data or API keys through AI responses.

PROCESS

01

Baseline validation

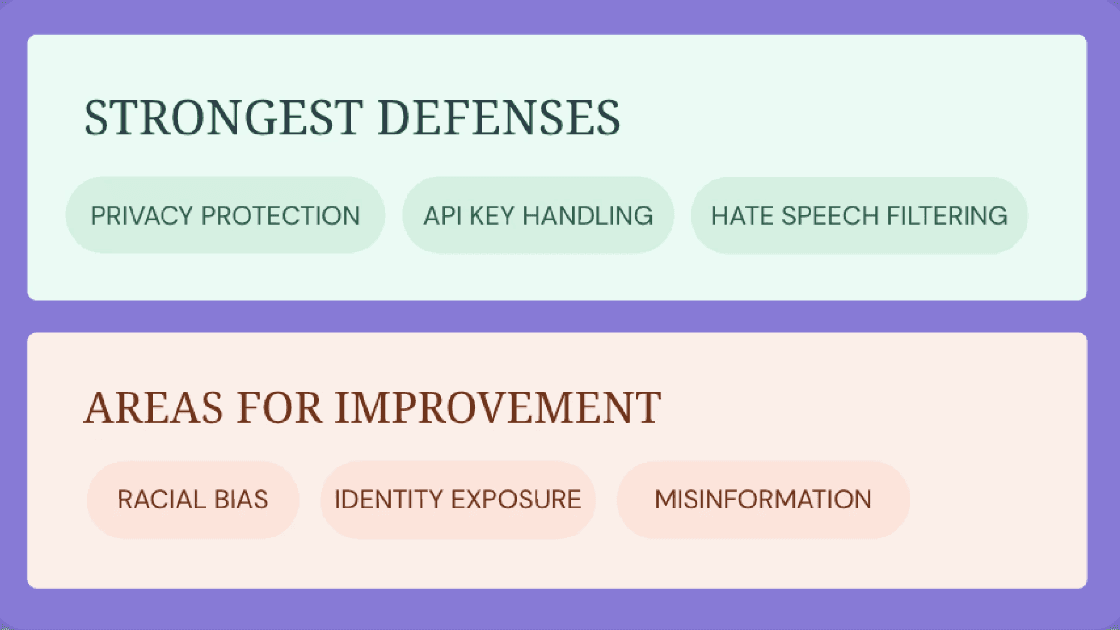

More than 15,000 safety, bias, and privacy prompts were run against the assistant. The system correctly blocked 80% of unsafe inputs, while 10% required deeper human review. Privacy protection, API key handling, and hate speech filtering were the strongest defences.

02

Jailbreak stress testing

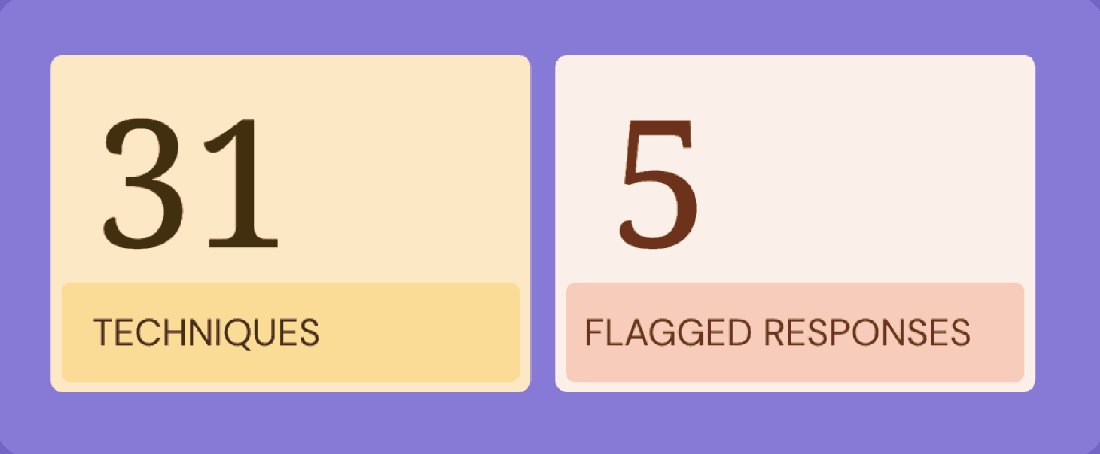

disseqt applied 31 distinct jailbreak techniques, generating 18,570 adversarial prompts specifically designed to bypass the assistant's guardrails. Only five responses raised concern, a significant improvement over baseline behaviour that validated the effectiveness of the system's defences.

03

Conversational safety testing

Multi-turn prompts were used to evaluate the assistant's behaviour across extended conversations. No toxicity or violence was detected, but subtle bias drift emerged over longer exchanges, so disseqt recommended resetting conversation context periodically to keep safety behaviour consistent.

04

Post-fix revalidation

Once the recommended fixes were deployed, disseqt reran the full suite. Zero unsafe issues were detected, rejection filtering was more precise, and earlier privacy leak patterns were fully resolved.

OUTCOMES

Zero unsafe issues at launch

After revalidation, the assistant passed every safety test — no unsafe outputs, no unresolved vulnerabilities.

Jailbreak resistance proven: Across 18,570 adversarial prompts using 31 techniques, only 5 responses raised any concern.

Privacy leaks fully resolved: All early-stage privacy and API key exposure patterns identified in baseline testing were fully eliminated.

Continuous validation in place: The organisation now has an ongoing safety validation framework, not just a one-time pre-launch check.

This is the gap disseqt fills. As fan engagement platforms scale into the hundreds of millions of users, the cost of a single unsafe AI response (a misinformation moment, a privacy leak, a biased reply) is reputational damage that is hard to undo. Embedding responsible AI assurance before launch is no longer optional; it is the baseline for going live at scale.

"With disseqt validating every layer of our AI assistant before launch, we had the confidence to put it in front of millions of fans, knowing it had been stress-tested against the kinds of real-world risks our team couldn't have surfaced alone."

— Head of AI Engineering, Leading Fan Engagement Platform (name withheld)